HFT Engine Latency - Part 6: Custom WebSocket

Zero Allocation WebSocket

Introduction

In an earlier article on latency optimisation of the Apex open source trading engine, we examined the performance impact of replacing the JSON parser, moving from JSON for Modern C++ to simdjson. That change gave a significant improvement on the inbound pathway.

However, the investigation ended with an unresolved issue: a substantial number of heap allocations still occur during market data handling. Profiling traced these allocations to WebSocket++, the library used by Apex to handle the WebSocket protocol.

To address these remaining allocations, we next replace WebSocket++ with a custom-built protocol handler designed to perform zero allocations. The impact of this change is then measured to determine the additional latency benefit.

The Problem

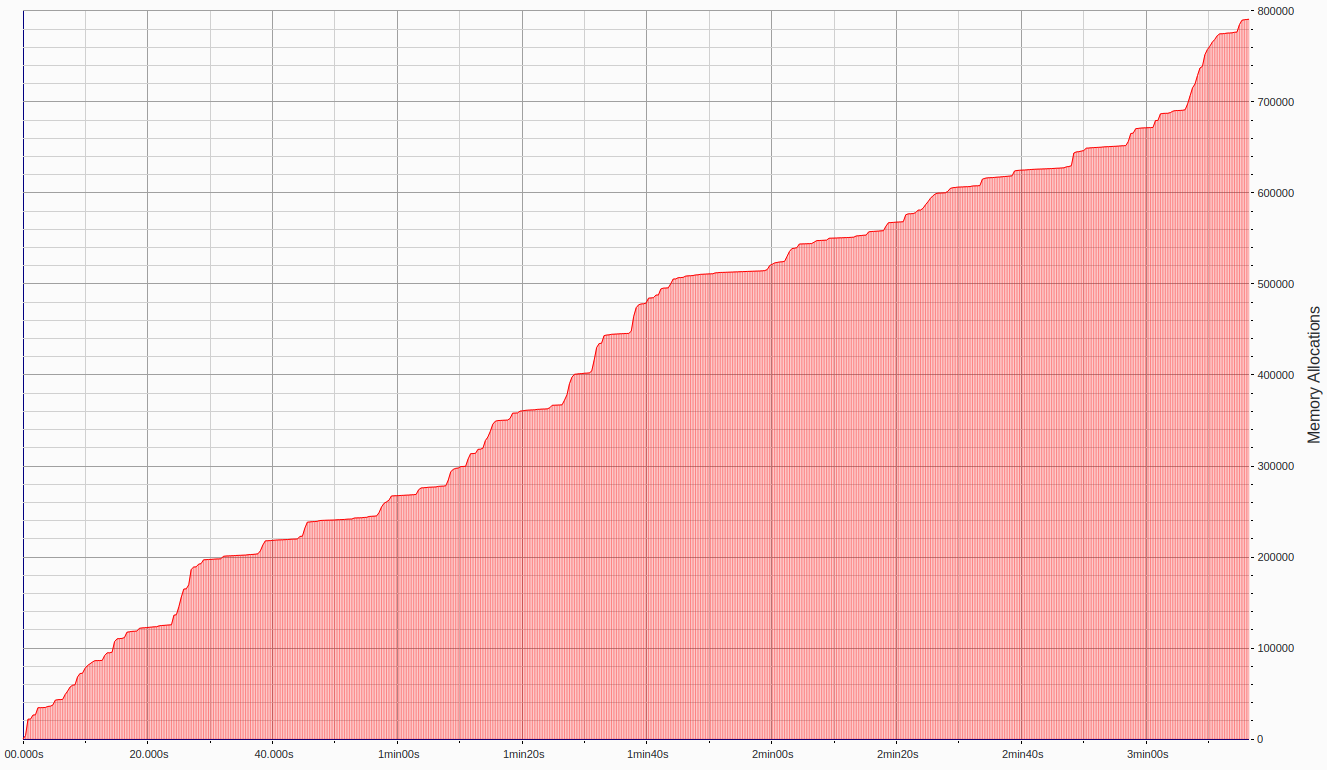

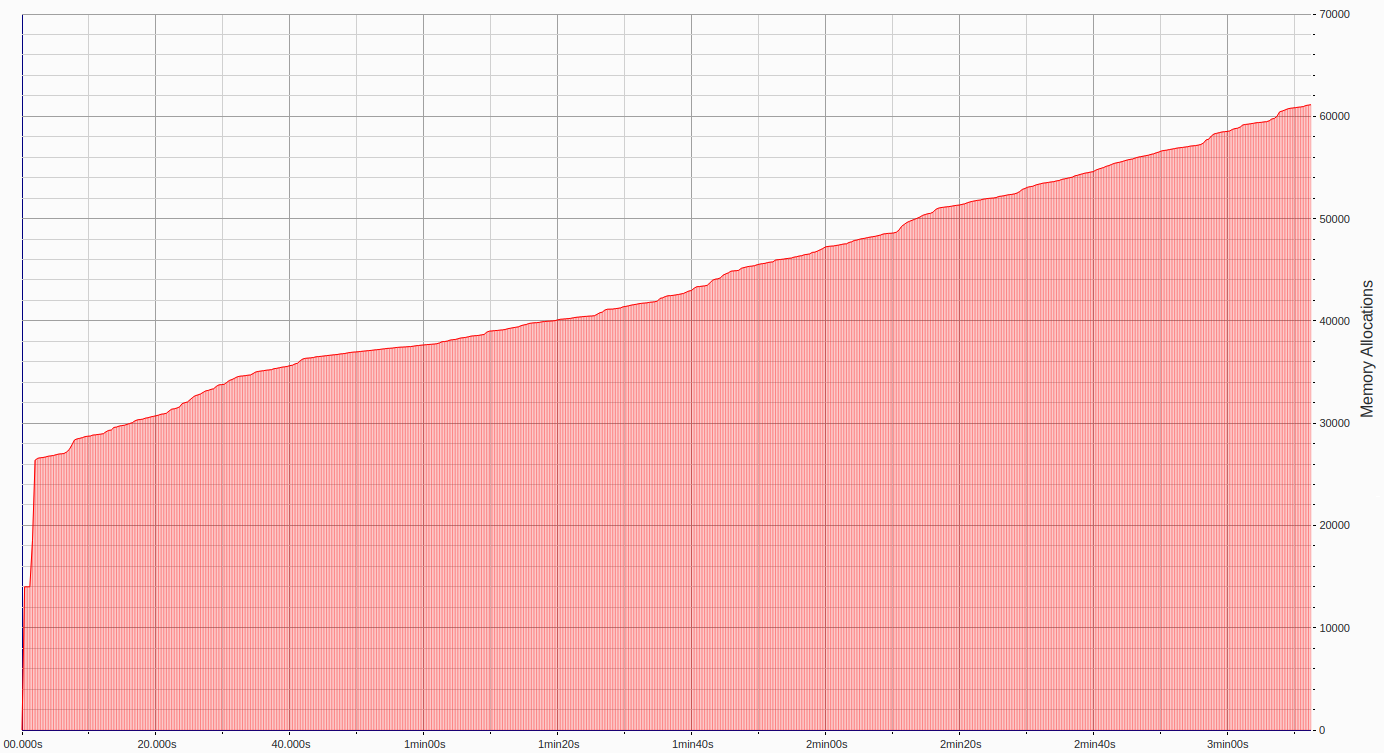

The graph below shows the heap allocations that remain after Apex was migrated to simdjson. The key observation is that allocations steadily increase over the three-minute run, indicating that they are driven by incoming messages rather than by transient spikes.

As noted in the introduction, profiling traced these allocations to WebSocket++, the library Apex used to handle the WebSocket protocol.

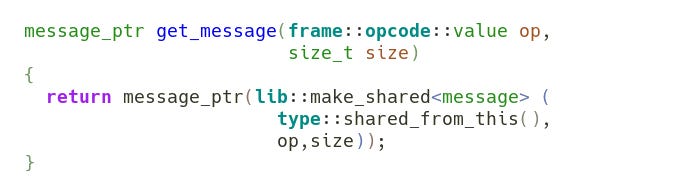

When processing raw bytes, WebSocket++ makes a pair of nested calls. The first, to a function named get_message, creates a new message object, which later accumulates the inbound data.

This function invokes the message constructor, during which the std::string member variable m_payload reserves sufficient capacity to hold the incoming message.

The code therefore shows that at least two heap allocations may occur per inbound message: one for the shared_ptr managing the message object’s lifetime, and a second for the internal buffer of the std::string.
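The pattern described above can be reproduced in isolation. The following is a simplified sketch, not the WebSocket++ source: the Message type, allocations_for_one_message and the counting operator new are hypothetical names introduced purely for illustration. It counts how many heap allocations occur when a shared_ptr-managed object reserves string capacity in its constructor.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdlib>
#include <memory>
#include <new>
#include <string>

// Hypothetical allocation counter: replacing the global operator new means
// every heap allocation made through it increments g_alloc_count.
static std::size_t g_alloc_count = 0;

void* operator new(std::size_t size) {
    ++g_alloc_count;
    if (void* p = std::malloc(size != 0 ? size : 1)) return p;
    throw std::bad_alloc{};
}
void operator delete(void* p) noexcept { std::free(p); }
void operator delete(void* p, std::size_t) noexcept { std::free(p); }

// Simplified stand-in for a library message object (not the real class).
struct Message {
    explicit Message(std::size_t expected_size) {
        // Allocates once when expected_size exceeds the small-string buffer.
        m_payload.reserve(expected_size);
    }
    std::string m_payload;
};

// Held in a global so the compiler cannot optimise the allocations away.
static std::shared_ptr<Message> g_last_message;

// Returns the number of heap allocations made while creating one message.
std::size_t allocations_for_one_message(std::size_t expected_size) {
    const std::size_t before = g_alloc_count;
    g_last_message = std::make_shared<Message>(expected_size);
    return g_alloc_count - before;
}
```

With common standard library implementations, a call such as allocations_for_one_message(1024) should report two allocations: one from make_shared (object and control block together) and one from the string reserve.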

These allocations are not bugs; they simply reflect WebSocket++’s design. The library is a fully featured, general-purpose WebSocket implementation supporting multiple protocol versions, fragmentation, large message sizes, compression and flexible usage patterns.

Heap allocation in this context is unsurprising and uncontroversial, and poses no concern for general application use. It is driven by the need to accommodate arbitrarily large messages, possibly split across multiple frames.

In HFT, however, where thousands of messages must be processed per second, even two allocations per message add up to a significant cost. Each allocation takes time, introduces non-determinism and, importantly, can be avoided with a carefully designed zero-allocation approach.

Custom WebSocket

The immediate aim, then, is to achieve zero-allocation WebSocket parsing.

In general there are two ways to address such a challenge. One is to modify the existing library to add the desired behaviour & performance. The other is to replace it, either with an alternative third-party library or with a custom-built solution.

Given that WebSocket parsing is not inherently complex - its core just comprises header parsing, masking and message framing - implementing a minimal handler tailored to Apex’s needs is a practical option. By contrast, altering WebSocket++ to change its allocation model would likely be a far more involved task.
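To give a sense of how small the core protocol work is, here is a minimal sketch of header parsing and unmasking, following the frame layout in RFC 6455. It is not the Apex implementation: FrameHeader, parse_frame_header and unmask_in_place are hypothetical names, and the sketch ignores extensions and reserved bits.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <cstring>

// Hypothetical decoded view of a WebSocket frame header (RFC 6455 layout).
struct FrameHeader {
    bool fin;                              // final frame of a message
    std::uint8_t opcode;                   // 0x1 text, 0x2 binary, 0x8 close, ...
    bool masked;                           // set on client-to-server frames
    std::uint64_t payload_length;          // decoded payload length in bytes
    std::array<std::uint8_t, 4> mask_key;  // valid only when masked is true
    std::size_t header_size;               // bytes consumed by the header
};

// Parses a frame header from data[0..size). Returns false when more bytes
// are needed. No heap allocation is performed.
bool parse_frame_header(const std::uint8_t* data, std::size_t size, FrameHeader& out) {
    if (size < 2) return false;
    FrameHeader h{};
    h.fin = (data[0] & 0x80) != 0;
    h.opcode = static_cast<std::uint8_t>(data[0] & 0x0F);
    h.masked = (data[1] & 0x80) != 0;
    std::uint64_t length = data[1] & 0x7F;
    std::size_t pos = 2;
    // 126 and 127 signal a 16-bit or 64-bit big-endian extended length.
    std::size_t extended_bytes = 0;
    if (length == 126) extended_bytes = 2;
    else if (length == 127) extended_bytes = 8;
    if (size < pos + extended_bytes) return false;
    if (extended_bytes != 0) {
        length = 0;
        for (std::size_t i = 0; i < extended_bytes; ++i) {
            length = (length << 8) | data[pos + i];
        }
        pos += extended_bytes;
    }
    if (h.masked) {
        if (size < pos + 4) return false;
        std::memcpy(h.mask_key.data(), data + pos, 4);
        pos += 4;
    }
    h.payload_length = length;
    h.header_size = pos;
    out = h;
    return true;
}

// Unmasking is a byte-wise XOR with the 4-byte key, applied in place.
void unmask_in_place(std::uint8_t* payload, std::size_t length,
                     const std::array<std::uint8_t, 4>& key) {
    for (std::size_t i = 0; i < length; ++i) payload[i] ^= key[i % 4];
}
```

Frames sent from server to client are unmasked, so a market data consumer would mostly exercise the unmasked branch; masking matters for the frames the client itself sends, such as subscription requests.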

So the approach taken was to build a custom WebSocket handler. Developing low-level protocol implementations is a common requirement in high-frequency trading systems, where developers routinely implement proprietary binary exchange protocols for both market data consumption and order entry.

The primary design goal for Apex’s WebSocket parser was to eliminate heap allocations. Whereas WebSocket++ requires allocations in order to receive large message sizes (necessary for a general-purpose library), Apex enforces a maximum message size. This allows a fixed-size buffer to be used for accumulating incoming bytes until a complete WebSocket message can be reconstructed. No dynamic resizing occurs, and therefore no heap allocations are required.
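The fixed-size buffer idea can be sketched as follows. This is an illustrative outline rather than the Apex code: the FixedBuffer name, its interface and the choice to reject oversized input by returning false are all assumptions.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <cstring>

// Hypothetical fixed-capacity byte accumulator. Storage lives inside the
// object, so appending and consuming never touch the heap.
template <std::size_t Capacity>
class FixedBuffer {
public:
    // Appends n bytes; returns false (leaving the buffer unchanged) if they
    // would not fit. The caller decides how to handle an oversized message.
    bool append(const std::uint8_t* src, std::size_t n) {
        if (n > Capacity - size_) return false;
        std::memcpy(data_.data() + size_, src, n);
        size_ += n;
        return true;
    }

    // Discards the first n bytes (a fully decoded message) and shifts any
    // remaining bytes to the front of the buffer.
    void consume(std::size_t n) {
        if (n > size_) n = size_;
        std::memmove(data_.data(), data_.data() + n, size_ - n);
        size_ -= n;
    }

    const std::uint8_t* data() const { return data_.data(); }
    std::size_t size() const { return size_; }
    static constexpr std::size_t capacity() { return Capacity; }

private:
    std::array<std::uint8_t, Capacity> data_{};
    std::size_t size_ = 0;
};
```

A buffer such as FixedBuffer<65536> could be a member of the connection object, so its memory is obtained once at start-up. The shift in consume is a simplification; a production design might track read and write offsets to avoid the copy.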

This choice introduces an explicit trade-off: Apex does not support arbitrarily large messages. The benefit is deterministic memory usage and zero allocation. Moving away from general-purpose flexibility in favour of limited behaviour with improved performance is a common theme in low-latency system design.

Could this constraint become a problem if we suddenly start to receive huge messages? In practice, market data messages are typically small, with only full-depth snapshots reaching moderate size. Message sizes can be monitored to ensure they remain comfortably within the configured buffer limit.
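One way such monitoring might look is a simple high-water mark. The sketch below is an assumption about how it could be done, not a description of Apex: MessageSizeMonitor and its thresholds are hypothetical.

```cpp
#include <cassert>
#include <cstddef>

// Hypothetical monitor that tracks the largest message seen and flags any
// message at or above a warning threshold, well before the hard limit.
class MessageSizeMonitor {
public:
    MessageSizeMonitor(std::size_t buffer_capacity, std::size_t warn_threshold)
        : capacity_(buffer_capacity), warn_threshold_(warn_threshold) {}

    // Records one message size. Returns true when the size is at or above
    // the warning threshold, so the caller can log or raise an alert.
    bool record(std::size_t message_size) {
        if (message_size > max_seen_) max_seen_ = message_size;
        return message_size >= warn_threshold_;
    }

    std::size_t max_seen() const { return max_seen_; }

    // Remaining bytes between the largest message seen and the buffer limit.
    std::size_t headroom() const {
        return max_seen_ >= capacity_ ? 0 : capacity_ - max_seen_;
    }

private:
    std::size_t capacity_;
    std::size_t warn_threshold_;
    std::size_t max_seen_ = 0;
};
```

The monitor itself uses only a few integers, so it adds no allocations to the inbound path.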

Performance

As in earlier articles, we examine the performance impact of this change through two experiments: a simulated high-load scenario subscribing to four symbols, and a light-load scenario subscribing to a single symbol.

The system used is a 3.40 GHz Intel Core i7-6700 CPU, with C-states and P-states configured for maximum performance (as described in a previous article).

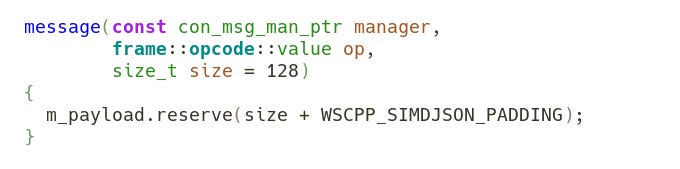

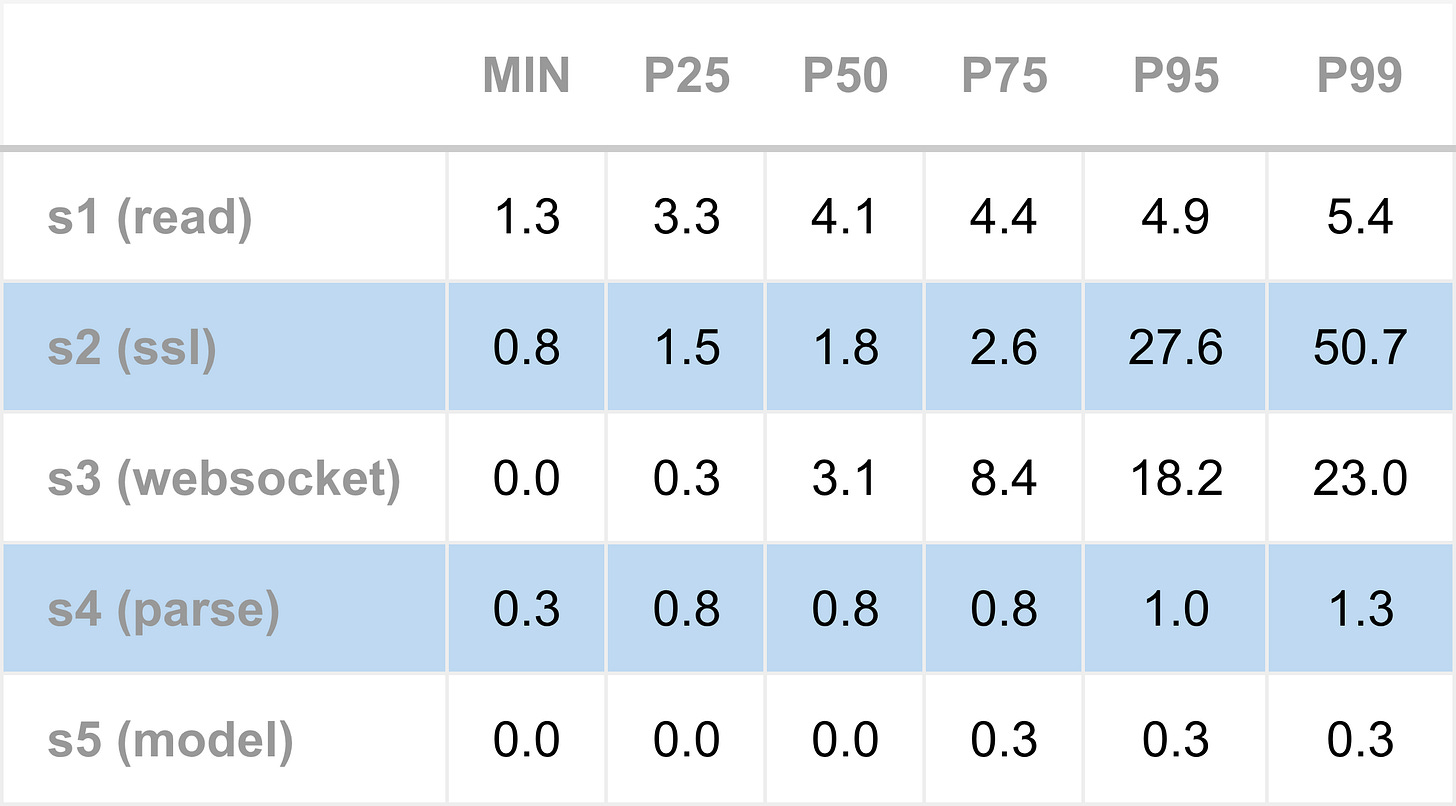

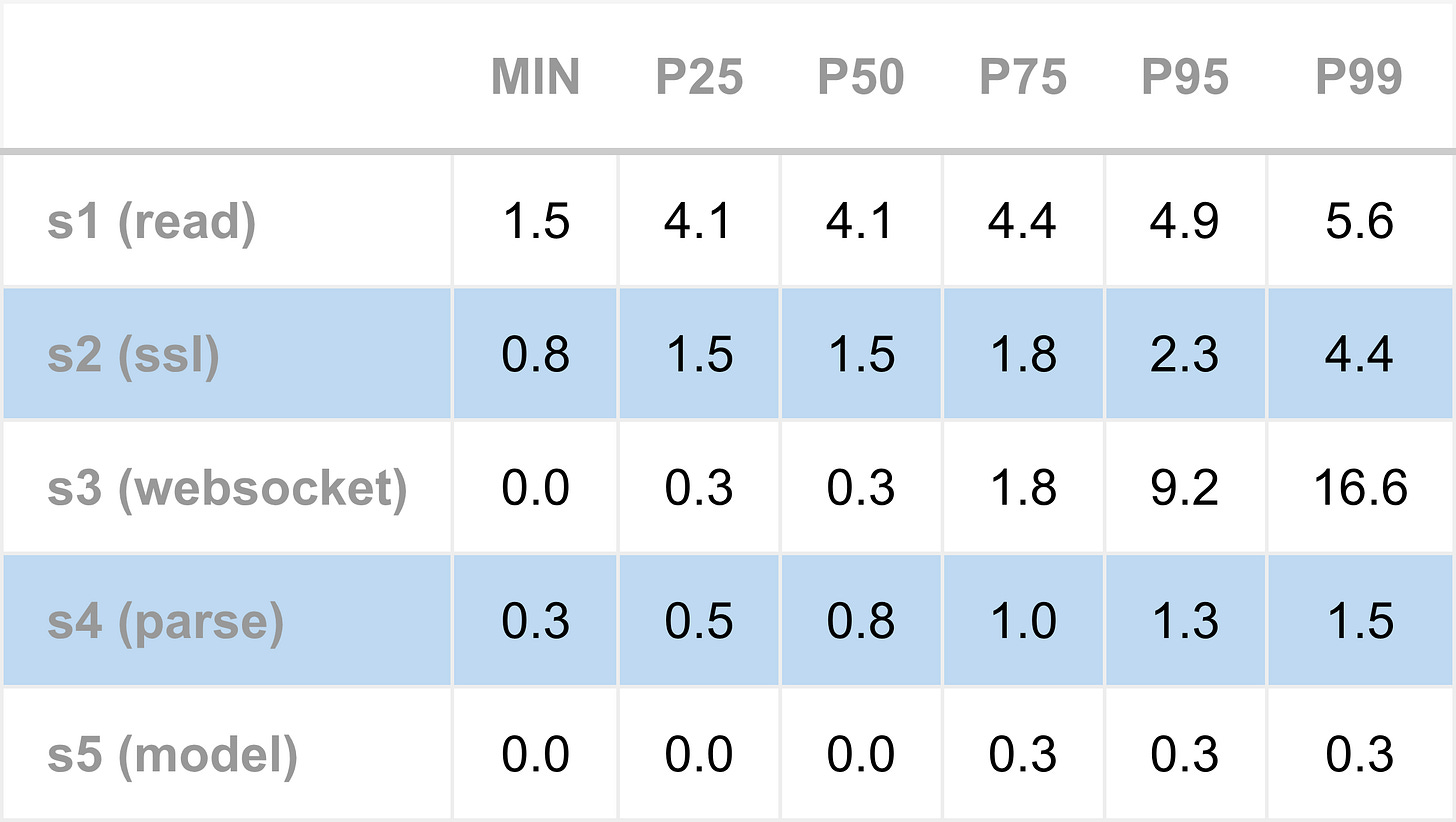

The first table shows the results for the four-symbol scenario. The 25th percentile measures 0.3 microseconds for the WebSocket stage, but higher percentiles (P50 and above) rise sharply. This reflects queuing effects that naturally occur with high message load: the measured times include not only the per-message processing time in the code, but also how long messages wait in the fixed buffer until it’s their turn to be decoded.
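The queuing effect can be illustrated with a toy model. It assumes, purely for illustration, that a burst of messages arrives at the same instant and that each takes the same fixed time to decode; burst_latencies_ns is a hypothetical helper, not measurement code from Apex.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Toy model: burst_size messages arrive together and are decoded one at a
// time. The k-th message waits for its k predecessors before being served,
// so its measured latency is wait time plus its own service time.
std::vector<std::uint64_t> burst_latencies_ns(std::size_t burst_size,
                                              std::uint64_t service_time_ns) {
    std::vector<std::uint64_t> latencies;
    latencies.reserve(burst_size);
    for (std::size_t k = 0; k < burst_size; ++k) {
        const std::uint64_t wait_ns = k * service_time_ns;
        latencies.push_back(wait_ns + service_time_ns);
    }
    return latencies;
}
```

With a 300 ns service time and a burst of four, the model gives 300, 600, 900 and 1200 ns: only the first message sees the baseline cost, which is consistent with low percentiles sitting near 0.3 microseconds while higher ones climb.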

The next table shows the single-symbol results. Both P25 and P50 measure 0.3 microseconds for WebSocket parsing, indicating this is essentially the baseline processing time for a single message. Higher percentiles still rise due to queuing, but now much less severely than in the multi-symbol scenario.

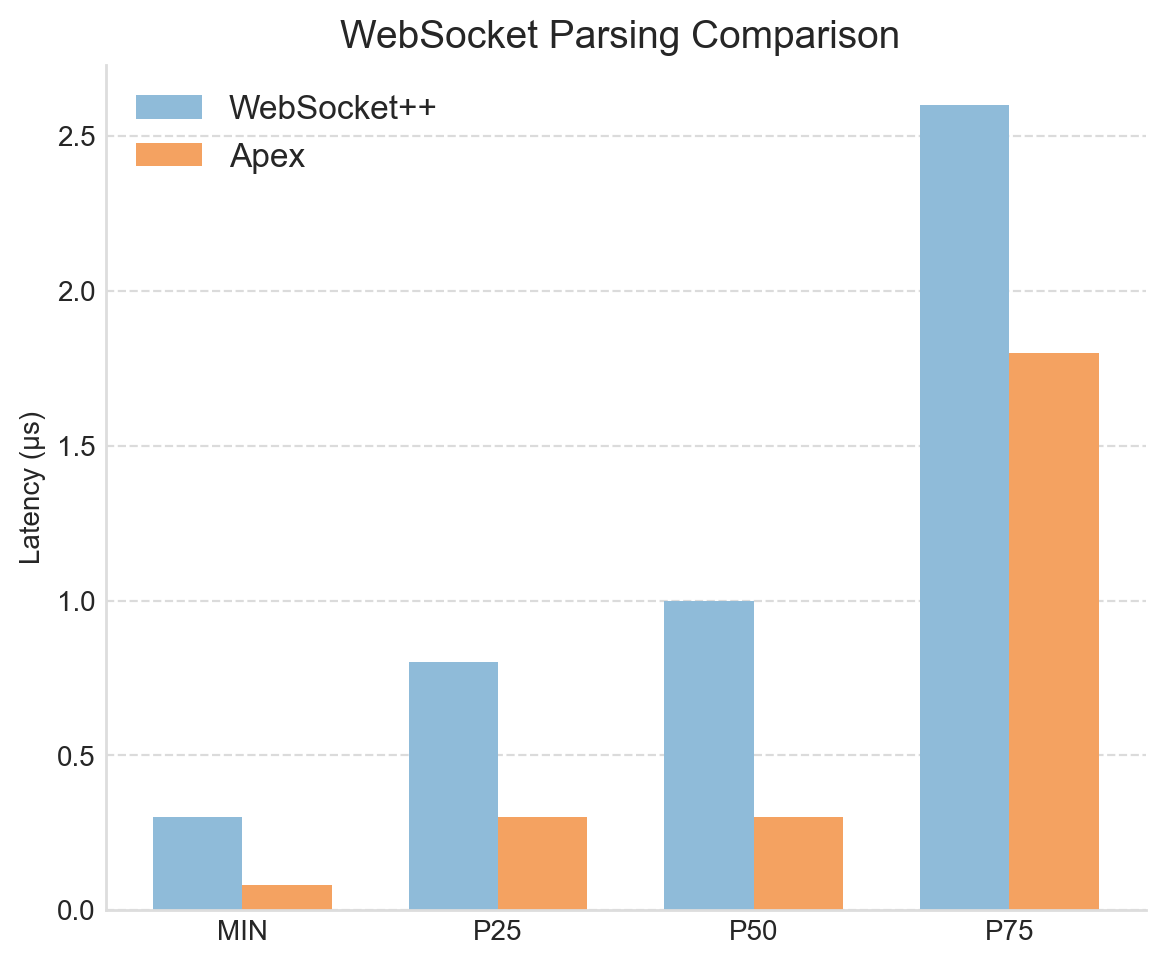

To examine the improvement over the previous approach, the following chart compares WebSocket++ to Apex for the WebSocket parsing stage. At P25 and P50 we see a significant improvement of approximately 0.5 microseconds, a clear win that also persists into higher percentiles.

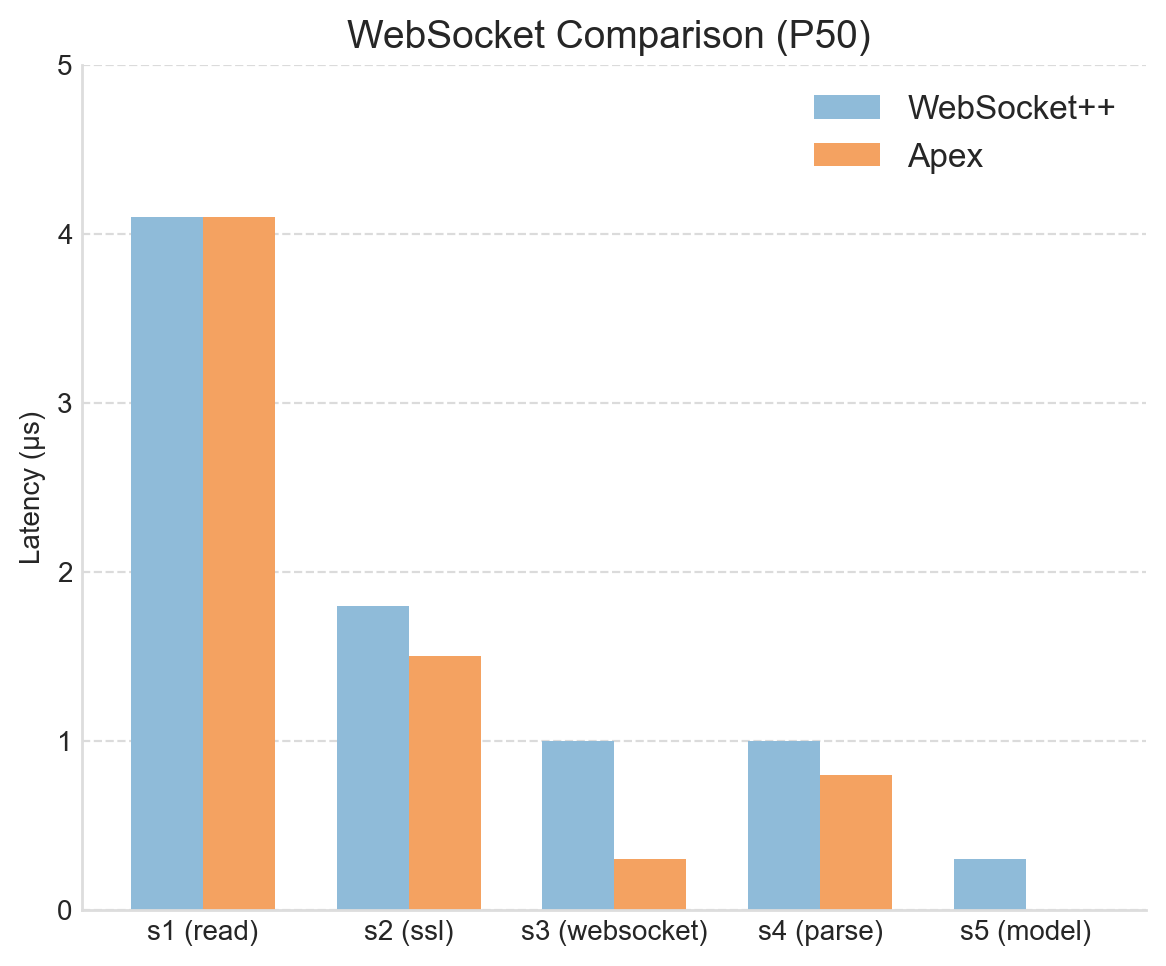

In the final chart we compare the entire tick-to-model latency, focusing on P50 (the median), which represents typical performance. This confirms that the new parser introduces no adverse side effects. In fact, there are indications that reduced memory usage has slightly improved adjacent stages, such as SSL decryption and JSON parsing.

It’s worth noting that the WebSocket stage minimum latency is consistently lower than the P25 and P50 measurements, at under 0.1 microseconds. This minimum number represents a “best-case” scenario: messages happen to arrive when the thread is ready, buffers are empty, and all relevant instructions and data are already in the CPU cache. While interesting, these minimum values are outliers and not representative of typical processing. The P50 and higher percentiles provide a more meaningful view of the system’s normal performance, including the small queuing delays that naturally occur even under light load.
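For readers who want to reproduce this kind of summary from their own latency samples, here is a minimal sketch using the nearest-rank definition of a percentile. The percentile function and the choice of nearest-rank are assumptions; the article does not state which method was used to produce its tables.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Nearest-rank percentile: the value at 1-based rank ceil(p/100 * N) in the
// sorted samples. Takes the samples by value because nth_element reorders.
// Returns 0.0 for an empty input. Intended for offline analysis, not the
// hot path.
double percentile(std::vector<double> samples, double p) {
    if (samples.empty()) return 0.0;
    const std::size_t n = samples.size();
    std::size_t rank = static_cast<std::size_t>(
        std::ceil(p / 100.0 * static_cast<double>(n)));
    if (rank < 1) rank = 1;
    if (rank > n) rank = n;
    std::nth_element(samples.begin(), samples.begin() + (rank - 1), samples.end());
    return samples[rank - 1];
}
```

Calling percentile(samples, 0) returns the minimum, which makes it easy to see how far the best case sits below P25 and P50 for a given run.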

Still memory problems?

The primary aim of replacing WebSocket++ was to eliminate heap allocations when processing market data. While the performance improvements are clear, it’s important to confirm that allocations were actually reduced.

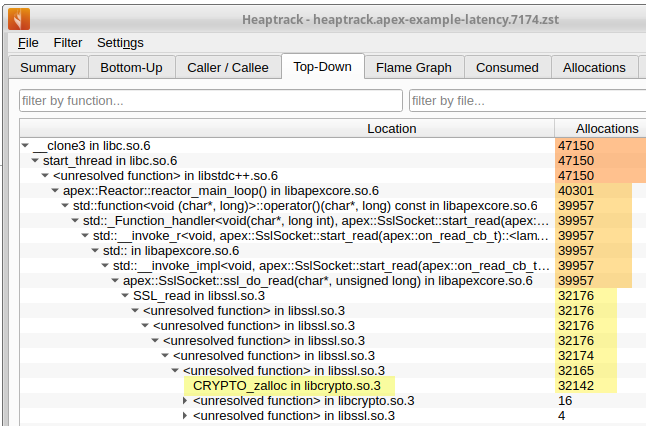

The excellent profiling tool Heaptrack was again used for this purpose, capturing metrics for a short run of Apex. The resulting data, presented next, makes it immediately obvious that heap allocations continue to occur.

This initially feels disappointing - the critical inbound path has not been completely freed from allocations. Perhaps the change didn’t fully work? Further digging is required to understand what is going on.

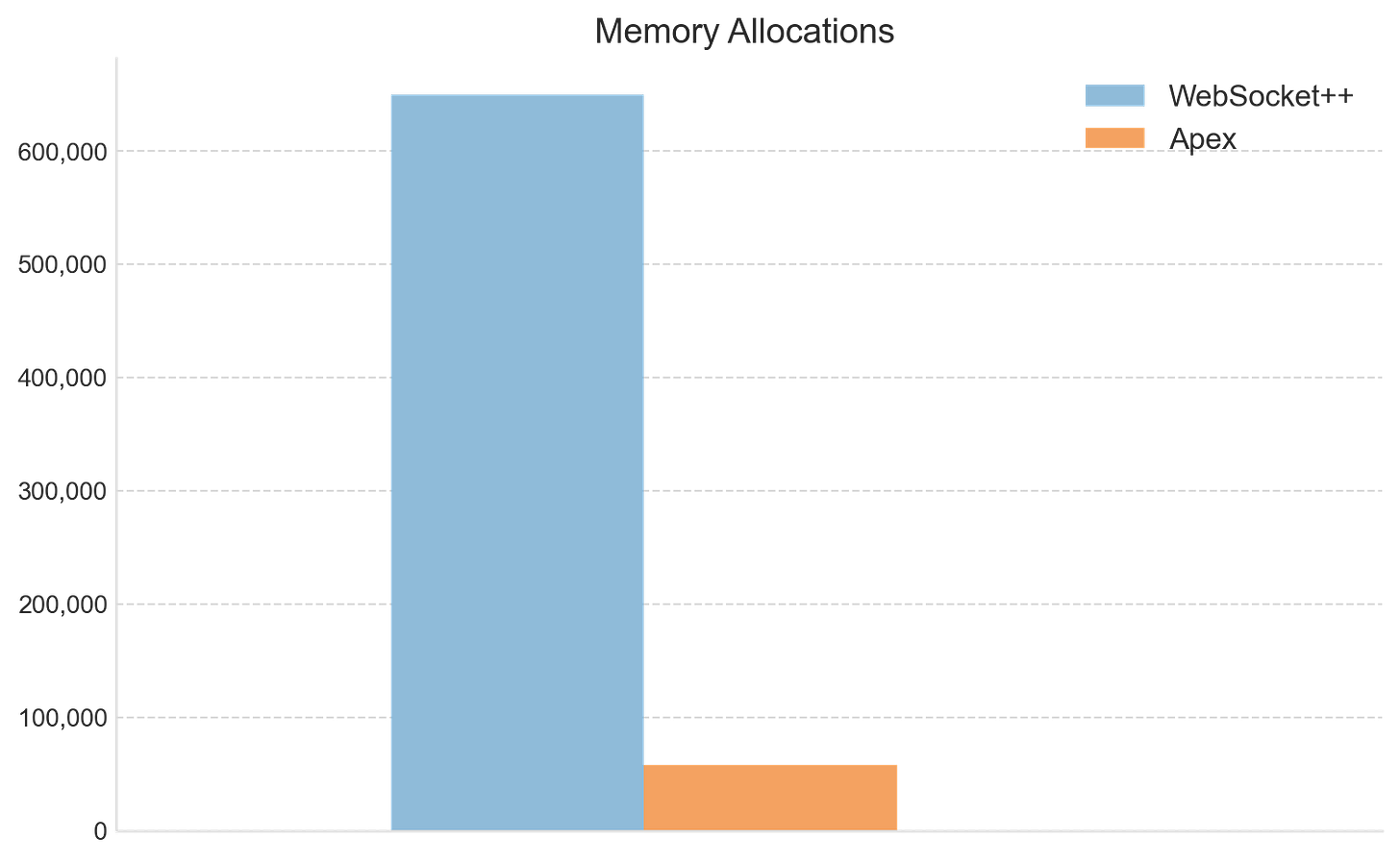

First we can quantify exactly how many heap allocations remain, and compare with before. That comparison is shown next. The difference is stark: allocations have reduced dramatically, confirming the code change had the desired effect. The rate has fallen from roughly 4,000 allocations per second to around 310 per second, a reduction of more than ten-fold.

So what’s causing these remaining allocations? Heaptrack’s top-down view provides the answer. The majority occur in the function CRYPTO_zalloc, part of OpenSSL, the library Apex uses to handle Transport Layer Security (TLS) and Secure Sockets Layer (SSL) protocols. These allocations are unrelated to message parsing; they arise from cryptographic operations in the secure transport layer, which is required because cryptocurrency exchanges typically distribute market data over secure WebSocket connections.

Summary

This article has described a further latency improvement to Apex, this time in how it handles the WebSocket protocol.

Apex previously used the general-purpose WebSocket library WebSocket++. While a capable and highly featured implementation, it is designed for general application use and therefore, unsurprisingly, performs dynamic memory allocations when parsing market data.

This has now been replaced with a custom WebSocket protocol handler designed to operate with zero heap allocations. This was achieved by enforcing a maximum supported message size, an acceptable constraint for HFT applications.

Performance comparisons confirm the benefit. Protocol parsing improved by approximately 0.5 microseconds, which is small in absolute terms but highly significant in low-latency trading systems that target tick-to-trade latency of single-digit microseconds. Profiling also showed that allocations dropped dramatically, from roughly 4,000 per second to under 400 per second.

A broader lesson here is that for HFT systems, general-purpose solutions can often be a liability. Libraries such as WebSocket++ aim for flexibility and completeness, not determinism and low latency. In latency-sensitive systems, general functionality is often traded for low and predictable latency.

We have seen that heap allocations still occur on the inbound pathway. Profiling shows that these mostly originate in the OpenSSL library. These allocations arise from SSL/TLS processing, which is required because cryptocurrency exchanges distribute market data over secure WebSocket.

Although the remaining allocation rate is far lower than before, further reductions may still yield modest latency improvements, not only by avoiding the time spent on allocations, but also because reduced memory churn can positively affect adjacent stages of the pipeline. However, replacing OpenSSL with a custom SSL/TLS implementation is not a practical option, so alternative strategies will need to be explored.

The Apex WebSocket implementation will also require extension. One important protocol feature not yet supported is fragmentation, which is the splitting and recombination of logical messages across multiple frames. While fragmentation is unlikely to be used by cryptocurrency exchanges, it should nonetheless be supported. When implemented, we will see how the design will incorporate a fast path for short messages (the overwhelming majority of cases) and a slower path for fragmented data.
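The split between a fast and a slow path could hinge on two header fields defined by RFC 6455: the FIN bit and the opcode. The sketch below shows one possible dispatch rule. It is speculative, since the Apex design for fragmentation has not yet been described, and FramePath and classify_frame are hypothetical names.

```cpp
#include <cassert>
#include <cstdint>

// Hypothetical routing decision for an incoming frame.
enum class FramePath {
    FastSingleFrame,  // complete message in one frame: decode in place
    SlowFragmented,   // part of a fragmented message: needs reassembly
    Control           // close, ping or pong: handled separately
};

// Opcode values from RFC 6455: 0x0 continuation, 0x1 text, 0x2 binary,
// 0x8 close, 0x9 ping, 0xA pong. Control frames are never fragmented.
FramePath classify_frame(bool fin, std::uint8_t opcode) {
    if (opcode >= 0x8) return FramePath::Control;
    const bool is_continuation = (opcode == 0x0);
    // An unfragmented message is a single data frame with FIN set.
    if (fin && !is_continuation) return FramePath::FastSingleFrame;
    // Otherwise this is the first, a middle, or the last fragment.
    return FramePath::SlowFragmented;
}
```

Because the check is a couple of comparisons on fields already decoded from the header, the common single-frame case would pay almost nothing for fragmentation support.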

With the current and previous improvements in place, Apex’s internal tick-to-model latency, for a single-symbol deployment, is now just under 7 microseconds at the median (with an average of just under 9 microseconds). This includes SSL/TLS decryption, WebSocket processing, and JSON parsing.

We can imagine how Apex might perform if connected to an equity exchange. Such a deployment would use UDP instead of TCP, a binary protocol instead of JSON, and no encryption. In such a scenario it is reasonable to expect that the latency would fall to around 5 microseconds. At that point, the remaining latency would largely consist of socket I/O. With kernel bypass, that would also be significantly reduced, leading to the possibility of a total tick-to-trade latency below 5 microseconds. These are just some of the topics we will no doubt cover in later articles.